Over the past three weeks, I have written about accelerated computing, factories and humanoids.

Artificial intelligence, data centers and robotics need vast amounts of processing power. And that hyper-scale processing power needs vast amounts of electricity.

Demand for digital services, such as web searches, online shopping and social networks, is growing rapidly and will continue to grow. Since 2010, the number of internet users worldwide has more than doubled, while global internet traffic volume has expanded 25-fold, according to the International Energy Agency.

However, strong improvements in computing efficiency have helped limit the growth in energy demand from data centers and data transmission networks, each of which accounts for about 1.5 percent of global electricity use.

Such improved efficiency comes partly from green-power purchase agreements by the information and communications technology industry and the broader decarbonization of electricity grids, both aimed at achieving zero carbon emissions by 2030, and partly from a trend in processor development known as Koomey's law.

In 2011, Jonathan Koomey, a researcher long associated with Lawrence Berkeley National Laboratory, showed that the energy efficiency of a processor running at top speed has followed a trajectory similar to the one Moore's law describes for processing power. If that trend holds, the amount of energy needed for a fixed computing load falls by a factor of about 100 every decade.
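The factor-of-100 figure follows from simple compounding. The sketch below is only illustrative: it assumes that efficiency doubles roughly every year and a half, a number not given in this column, and the function names are made up for the example.

```python
import math


def efficiency_gain(years: float, doubling_time_years: float) -> float:
    """Factor by which computations per unit of energy grow over `years`."""
    return 2 ** (years / doubling_time_years)


def doubling_time_for(gain: float, years: float) -> float:
    """Doubling time (in years) implied by a total `gain` over `years`."""
    return years * math.log(2) / math.log(gain)


# Assumption: efficiency doubles every 1.5 years (illustrative value).
decade_gain = efficiency_gain(years=10, doubling_time_years=1.5)
print(f"Efficiency gain over a decade: about {decade_gain:.0f}x")

# Working backward: what doubling time gives exactly 100x per decade?
implied = doubling_time_for(gain=100, years=10)
print(f"Doubling time implied by 100x per decade: about {implied:.2f} years")
```

Put the other way round, a fixed computing load would need about one-hundredth of the energy after 10 years, provided the doubling pace is sustained.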

I want to look at two transformative trends, data center design and software-defined energy storage, that address the pain points of escalating operating costs and cooling challenges, as well as the quest for more efficient use of space and easier expansion.

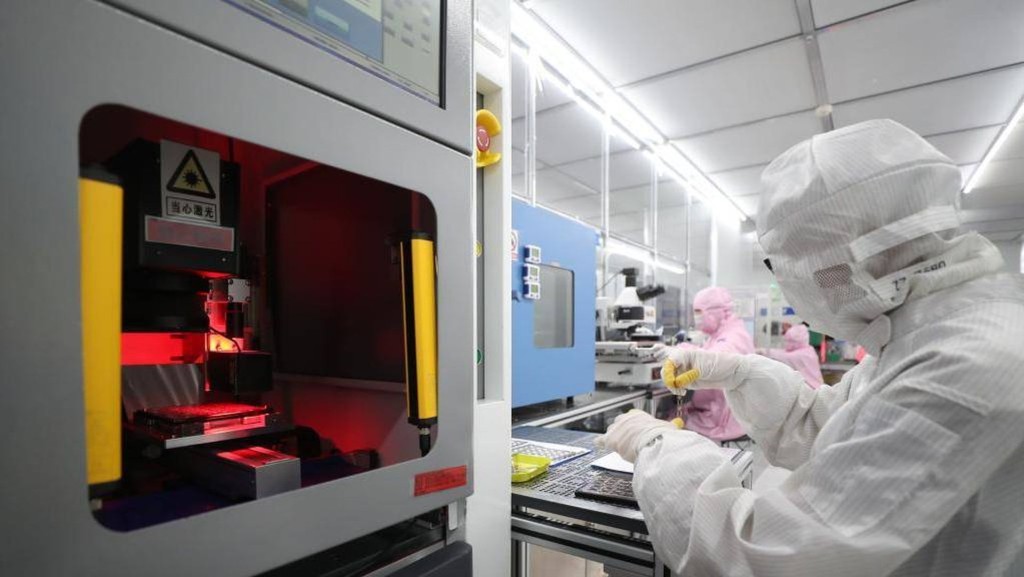

First, the intricate design of modern data centers has shifted toward modularity and sustainability.

Data centers typically have three major categories of equipment: networking equipment, such as internet protocol (IP) routers, Ethernet switches and devices for IP traffic distribution and load balancing; computation servers; and storage systems, divided into hot and cold storage.

The terms "hot" and "cold" indicate where data is located.

Hot data, which is accessed frequently, is stored close to the heat of the whirring hard drives and CPUs housed in towering rows of black server cabinets. Cold data, which is accessed less often, is stored on drives away from that metal heart of the data center floor.

Data centers are increasingly adopting green technologies and sustainable practices to reduce carbon footprints. This includes using advanced cooling systems and clean energy sources through renewable-energy certificates to claim centers as carbon-free.

Data center giants, such as Microsoft and Google, are investing in alternative energy technologies, including backing nuclear fusion startups, building small modular reactors and tapping into geothermal energy.

Modularity is another trend gaining momentum.

Data centers are evolving from monolithic structures to modular designs, allowing for fast design, scalable deployment and flexible expansion.

This approach caters to the dynamic needs of businesses, enabling them to efficiently manage resources and adapt to changing demands.

Next, new software-defined energy storage technology is enhancing speed and reliability at data centers.

This storage technology uses open, modular designs to connect power and energy elements over an IP network, which simplifies energy management and improves the efficiency of energy systems. It gives data centers the flexibility and scalability to handle growing data demands efficiently.

Data centers make and deliver big promises about environmental sustainability by adopting innovative solutions and staying ahead of emerging trends. But no matter how efficient data centers get, they still need a reliable power supply.

Hong Kong is an attractive destination as a data center hub as its electricity supply is over 99.999 percent reliable, relatively affordable and moving toward clean energy.
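To put "99.999 percent" in perspective, the arithmetic below converts a reliability percentage into the most downtime it would allow in a year. It is a rough sketch that assumes the figure can be read as annual availability in a 365-day year; the function name is hypothetical.

```python
HOURS_PER_YEAR = 365 * 24  # assumes a non-leap year


def max_downtime_minutes(availability_percent: float) -> float:
    """Maximum yearly downtime, in minutes, allowed by an availability figure."""
    unavailable_fraction = 1 - availability_percent / 100
    return unavailable_fraction * HOURS_PER_YEAR * 60


for level in (99.9, 99.99, 99.999):
    minutes = max_downtime_minutes(level)
    print(f"{level} percent available: up to {minutes:.1f} minutes of downtime a year")
```

Under that reading, a supply that is 99.999 percent reliable is interrupted for little more than five minutes a year.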

Dr Jolly Wong is a policy fellow at the Centre for Science and Policy, University of Cambridge

The Swiss go with solar panels in satellite dishes at the Leuk Teleport and Data Center.