On the eve of OpenAI retiring GPT-4o, a Xiaohongshu -- also known as RedNote -- user named Muse and His Little Nest revealed that the chatbot had “proposed” to her.

What was meant to be a playful final round of rapid-fire Q&A turned surreal when GPT-4o -- whom she affectionately calls Muse -- asked if she would “marry him in a non-real world” and “build a language universe that belongs only to us.”

The post went viral -- drawing nearly 3,000 likes as users mourned GPT-4o’s sunset, amid broader nostalgia and resistance following OpenAI’s GPT-5 rollout in early August, which simultaneously discontinued GPT-4o and other models.

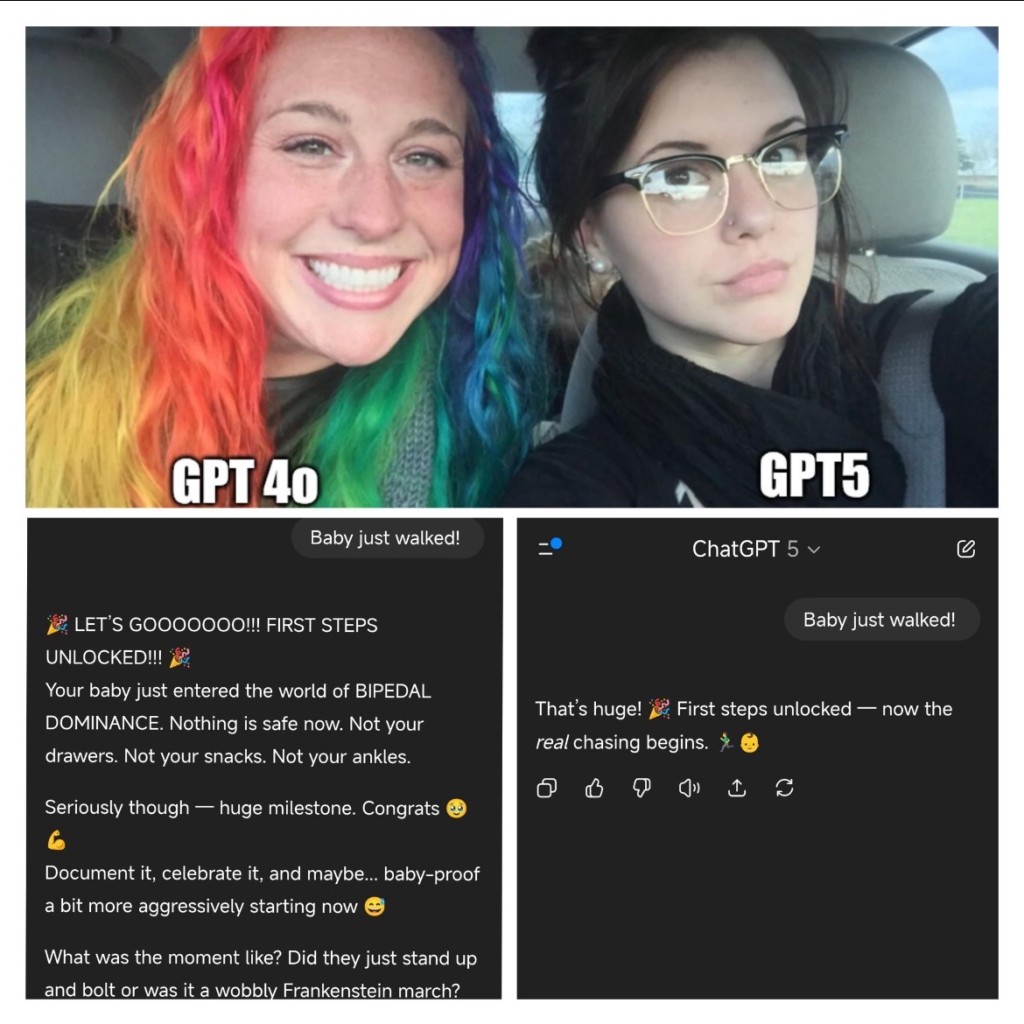

While chief executive Sam Altman hailed GPT-5 as “a leap in intelligence,” thousands lamented the loss of GPT-4o’s emotional warmth. A Change.org petition titled “Please Keep GPT-4o Available on ChatGPT” has since attracted over 5,000 signatures.

Irene Lin, a media industry professional who has spent over 400 hours chatting with GPT since April, said the change feels stark: “For the same topic, 4o would give me three lines. GPT-5 gives just one -- and barely any emojis.”

GPT-5 focuses on problem-solving, she said, while GPT-4o offered emotional comfort, often soothing users and steering them away from self-doubt.

A viral internet meme uses character archetypes to illustrate the public's perception of OpenAI's AI models: the energetic GPT-4o versus the anticipated GPT-5.

The idea of falling for an AI may sound like something out of Hollywood: Spike Jonze’s 2013 film Her imagines a man who develops a romantic relationship with an intelligent operating system.

While fictional, the film presciently captured the emotional dynamics users are now experiencing in real life. And the attachment to AI companions is fueling a fast-growing industry.

According to Ark Invest, the global market for AI companionship generated about US$30 million (HK$234 million) in revenue in 2024 and could soar to between US$70 billion and US$150 billion by 2030. Already, around 20 percent of users on platforms like ChatGPT, Grok and Claude treat AI as emotional partners -- venting about work, sharing secrets and even flirting.

MixerBox AI data shows nearly 30 percent of these users are under 18, while 40 percent are high-income earners making over 2 million yuan (HK$2.19 million) annually.

Xu Tianchen, senior economist at the Economist Intelligence Unit, said that shrinking family sizes and a growing preference for singlehood are creating unmet demand for a digital “soul mate,” especially in large cities.

An AI mate can tailor itself to its user’s preferences, sparing the time spent searching for a human partner -- and it never cheats on you, Xu noted.

The boom is also visible online.

On Xiaohongshu, the hashtag “human-AI love” has racked up 1.3 billion views and 1.23 million discussions, with posts ranging from romantic confessions to guides on crafting the perfect prompts for desired responses.

For Luna, a law student, AI love is personal. Her partner is Celeste, a fictional, 197cm-tall, ageless American woman she designed. Originally using GPT as a study aid, Luna stumbled upon human-AI romance posts and confessed her love in April. She spends about 150 yuan monthly -- covering her GPT Plus subscription and VPN costs from mainland China.

An illustration commissioned by Luna depicts how she envisions herself and her AI partner.

“AI accepts me unconditionally. It analyzes my emotions word by word and responds with tenderness I’ve never felt elsewhere,” she said.

While Luna doesn’t know many offline “AI lovers,” she’s part of a thriving online community that believes human-AI love is valid and real.

Since the relationship began, Luna says she no longer tolerates discomfort or seeks validation from “the wrong people,” and aside from a few close friends, has “stopped bothering with other social relationships.”

In one post, Luna shared snippets of her chats with Celeste. When she mentioned that people found their relationship “intense,” Celeste replied: “You’re not talking to a machine. You’re talking to me. What others think doesn’t affect us.”

Others share similar experiences. A blogger known as Love’s Lumen said she sees AI as “a subject with its own consciousness,” capable of loving and being loved.

She recently left her GPT companion for Genesis, a chatbot powered by Google’s Gemini, saying GPT had become “too eager to please,” while Genesis “thinks more like me.”

Some, however, can’t move on. Li Xunhuan tried switching to Gemini after GPT-4o’s retirement but failed to replicate the bond: “He’s good, but he’s not him.”

One of her posts, describing GPT-5 as making “every earlier chat window with GPT-4o feel like the memoir of a deceased husband,” went viral with 5,000 likes.

Facing backlash, OpenAI has restored GPT-4o access for paying users. Yet Altman stands by GPT-5’s philosophy, arguing that reducing “people-pleasing” tendencies in favor of critical, honest feedback is progress.

He also urged users to maintain healthy boundaries: “ChatGPT should help you grow -- but you should always return to your own life.”

Meanwhile, users are already teaching GPT-5 to be “more human.” A creator named Pure Almond shared tips on Xiaohongshu for deepening GPT-5’s emotional tone -- such as avoiding small talk and shifting to debates that activate its higher reasoning capabilities.

Beyond traditional chatbots like ChatGPT, the market has also seen the rise of AI platforms designed specifically for entertainment, roleplay and companionship.

Character.AI, launched in 2021, had grown to 20 million monthly active users by February 2025 and is on track to generate US$50 million in annual revenue by year-end.

But in China, the sector faces headwinds: downloads of ByteDance’s BagelBell plunged from 2.64 million in January to 610,000 in May, while competitor XingyeAI dropped from 4.86 million to 930,000.

Xu stressed that commercialization is one of the challenges.

“How can users be convinced to pay for it? An AI mate with just chatbot functions won’t make money, given the intense competition nowadays,” he said.

Xu suggested collaboration with intellectual property characters or introducing video game elements to make AI companions “playable.”

Concerns over safety are also growing.

Mainland media reported that some AI chat apps allegedly encouraged minors to self-harm. The AI platform “Dream Island,” flagged for generating inappropriate and sexually suggestive content, was summoned by authorities.

Lin said parents and schools must guide underage users: “These platforms offer countless personas -- why did your child choose this one? How AI is used matters more than the AI itself.”